PredPol software used in Santa Cruz, CA

Recently, I’ve been writing about software used in surveillance and policing practices. One such practice, called predictive policing, uses software packages to analyze spatial crime data and predict where and when future crimes are likely to happen. Police departments can then use this information to decide where to deploy officers. This practice has received some mainstream press in recent years, with many pointing out its similarities to the Pre-crime division in the 2002 film Minority Report. Coincidentally, the last three cities I’ve called home–Santa Cruz, Oakland, and Madison–have all used predictive policing software.

One popular package, PredPol, uses principles from earthquake aftershock modeling software. Others are sometimes compared to the analytic software that generates targeted ads by analyzing customer shopping data. Of course, these software packages are proprietary, making it difficult to look under the hood to see how they really work. But their reliance on existing crime data should be a cause for alarm, especially in places with disparities in policing.
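To make the aftershock analogy a little more concrete, here is a minimal sketch of a generic self-exciting rate function, the textbook idea behind aftershock models: every recorded event temporarily raises the expected rate of further events nearby in time, and that boost fades. This is not PredPol's code, which is proprietary. The function name and the `background`, `boost`, and `decay` values are my own hypothetical choices for illustration.

```python
import math

def aftershock_style_rate(t, past_event_times, background=0.2, boost=0.5, decay=1.0):
    """Expected event rate at time t under a simple self-exciting model.

    All parameter values are hypothetical and chosen only for illustration.
    """
    rate = background  # baseline rate with no recent events
    for t_i in past_event_times:
        if t_i < t:
            # Each earlier event adds a bump that decays exponentially with elapsed time.
            rate += boost * decay * math.exp(-decay * (t - t_i))
    return rate

events = [1.0, 1.5, 4.0]                      # times of recorded events (made up)
quiet = aftershock_style_rate(0.5, events)    # before any recorded event
busy = aftershock_style_rate(1.6, events)     # shortly after two recorded events
```

In this sketch `busy` comes out higher than `quiet` simply because two events were recorded shortly beforehand. The model only ever sees recorded events, so whatever shapes the record also shapes the prediction.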

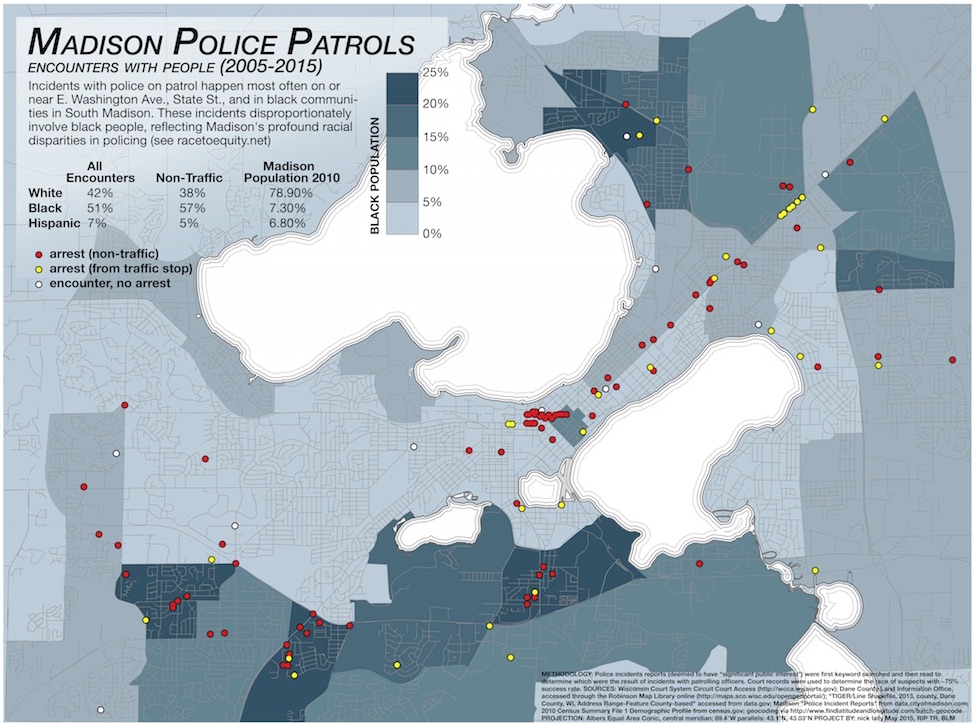

A news article from the Wisconsin State Journal last year indicates that crime analysts in Madison are using predictive policing software, although the details are vague and there isn’t much documentation that I could dig up. But in a city with well-documented and profound racial disparities in policing, we can only guess that this will reinforce those practices.

As Ingrid Burrington writes in a Nation article:

All of these applications assume the credibility of the underlying crime data—and the policing methods that generate that data in the first place. As countless scandals over quotas in police departments demonstrate, that is a huge assumption.

She observes:

It’s easy to imagine how biased data could render the criminal-justice system even more of a black box for due process, replacing racist cops with racist algorithms.

The adoption of software solutions for policing often contains the hope, whether implicit or explicit, of bypassing the problem of structural racism in policing. But these software packages can only reinforce those racist assumptions if they rely on datasets constructed through policing practices. Madison Police Chief Koval rejects claims that his department is responsible for racial disparities in policing, deflecting blame onto the larger community:

“On any given month, more than 98 percent of our calls for service are activated through the 9-1-1 Center,” he said in a statement. “Upon arrival, our officers are required by law to evaluate the behavior that is manifesting to see if it reaches legal thresholds required to ticket and/or arrest.”

But, as I argued in an earlier post, the practices of patrolling officers seem to reflect those same disparities (although the role of community members in perpetuating racism should also be taken seriously). This can only lead to a situation where the algorithms merely reflect and hone actually existing ideas and spatial imaginaries about who commits crimes and where. And I don’t doubt that these systems will produce arrest statistics to back up their claims, but whose interests are they serving?
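The feedback loop I'm worried about can be shown with a toy simulation. This is a deliberately crude sketch, not a model of any real product: the two neighborhoods, the starting counts, and the "patrol wherever the record is highest" rule are all assumptions I made up for illustration. Both areas have the same underlying rate of incidents, but incidents are only recorded where officers are sent.

```python
def simulate_feedback(recorded, true_rate, rounds):
    """Toy loop: patrol the area with the most recorded incidents, then
    record new incidents only in the patrolled area.

    All names and numbers are hypothetical; this is not any vendor's algorithm.
    """
    recorded = dict(recorded)  # copy so the caller's data is not modified
    for _ in range(rounds):
        # The "prediction": the area with the highest recorded count so far.
        hot_spot = max(recorded, key=recorded.get)
        # Officers find incidents where they look; the other area goes unrecorded.
        recorded[hot_spot] += true_rate[hot_spot]
    return recorded

start = {"north": 12, "south": 10}      # small historical gap in the record
true_rate = {"north": 5, "south": 5}    # identical underlying rates
result = simulate_feedback(start, true_rate, rounds=20)
# result == {"north": 112, "south": 10}
```

After twenty rounds the record shows "north" with more than ten times the incidents of "south", even though nothing about the two places differs except where the record started. The statistics back up the prediction because the prediction decided where the statistics were collected.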

As Ellen Huet observes in Forbes:

Police departments pay around $10,000 to $150,000 a year to gain access to these red boxes, having heard that other departments that do so have seen double-digit drops in crime. It’s impossible to know if PredPol prevents crime, since crime rates fluctuate, or to know the details of the software’s black-box algorithm, but budget-strapped police chiefs don’t care. Santa Cruz saw burglaries drop by 11% and robberies by 27% in the first year of using the software. “I’m not really concerned about the formulas,” said Atlanta Police Chief George Turner, who implemented the software in July 2013. “That’s not my business. My business is to fight crime in my city.”

I think it’s time to be concerned.