Archive for the 'policing' Category

In this paper, we examine the relationship between the digital and geography. Our analysis provides an overview of the rich scholarship that has examined: (1) geographies of the digital, (2) geographies produced by the digital, and (3) geographies produced through the digital. Using this material we reflect on two questions: has there been a digital turn in geography? and, would it be productive to delimit ‘digital geography’ as a field of study within the discipline, as has recently occurred with the attempt to establish ‘digital anthropology’ and ‘digital sociology’? We argue that while there has been a digital turn across geographical sub-disciplines, the digital is now so pervasive in mediating the production of space and in producing geographic knowledge that it makes little sense to delimit digital geography as a distinct field. Instead, we believe it is more productive to think about how the digital reshapes many geographies.

You can download a copy on the Social Science Research Network page.

Also of interest is the new issue of Surveillance & Society, a double issue with a theme of “Surveillance Asymmetries and Ambiguities.” While I haven’t read it through yet, many of the abstracts sound very promising as scholars attempt to complicate understandings of power relations, especially in relation to computational surveillance practices.

screenshot from video demo: https://www.youtube.com/watch?v=Xz_P9CXYpmA

Hitachi Data Systems, in a recent announcement, has upped the ante in the predictive policing game with the introduction of Visualization Predictive Crime Analytics (PCA) into their Visualization Suite. They claim:

PCA is the first tool of its kind to use real-time social media and Internet data feeds together with unique, sophisticated analytics to gather intelligent insight and enhance public safety through the delivery of highly accurate crime predictions.

The press release explicitly connects the technology to “smart cities,” claiming the suite will “help public and private entities accelerate extraction of rich, actionable insights from all of their data sources.” The use of the word “actionable” here is interesting and reminds me of Louise Amoore’s great article, “Data Derivatives: On the Emergence of a Security Risk Calculus for Our Times” from 2011. The data derivative, in Amoore’s article, is the “flag, map or score” that is inferred from large data sets like those used in Hitachi’s PCA system. She argues:

The pre-emptive deployment of a data derivative does not seek to predict the future, as in systems of pattern recognition that track forward from past data, for example, because it is precisely indifferent to whether a particular event occurs or not. What matters instead is the capacity to act in the face of uncertainty, to render data actionable.

The whole project seems wildly ambitious, with unknown statistical models, machine learning algorithms, and natural language processing routines holding it all together. More from the press release:

Hitachi Visualization Suite (HVS) is a hybrid cloud-based platform that integrates disparate data and video assets from public safety systems—911 computer-aided dispatch, license plate readers, gunshot sensors, and so on—in real time and presents them geospatially. HVS provides law enforcement with critical insight to improve intelligence, enhance investigative capabilities and increase operational efficiencies. Along with capturing real-time event data from sensors, HVS now offers the ability to provide geospatial visualizations for historical crime data in several forms, including heat maps. This feature is available in the Hitachi Visualization Predictive Crime Analytics (PCA) add-on module of the new Hitachi Visualization Suite 4.5 software release.

Blending real-time event data captured from public safety systems and sensors with historical and contextual crime data from record management systems, social media and other sources, PCA’s powerful spatial and temporal prediction algorithms help law enforcement and first responder teams assign threat levels for every city block. The algorithms can also be used to create threat level predictions to accurately forecast where crimes are likely to occur or additional resources are likely to be needed. PCA is unique in that it provides users with a better understanding of the underlying risk factors that generate or mitigate crime. It is the first predictive policing tool that uses natural language processing for topic intensity modeling using social media networks together with other public and private data feeds in real time to deliver highly accurate crime predictions.

from: https://www.hds.com/assets/pdf/hitachi-social-innovation-and-visualization-solutions-infographic.pdf

PredPol software used in Santa Cruz, CA

Recently, I’ve been writing about software used in surveillance and policing practices. One such practice, called predictive policing, uses software packages to analyze spatial crime data and predict where and when future crimes are likely to happen. Police departments can then use this information to decide where to deploy officers. This practice has received some mainstream press in recent years, with many pointing out its similarities with the Precrime division in the 2002 film Minority Report. Coincidentally, the last three cities I’ve called home (Santa Cruz, Oakland, and Madison) have all used predictive policing software.

One popular package, PredPol, uses principles from earthquake aftershock modeling software. Others are sometimes compared to analytic software used to create targeted ads through the analysis of customer shopping data. Of course, these software packages are proprietary, making it difficult to look under the hood to see how they really work. But their use of existing crime data should be a cause for alarm, especially in places with disparities in policing.
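To make the aftershock analogy concrete: earthquake models of this kind are usually self-exciting point processes, in which each event temporarily raises the expected rate of further events nearby. The sketch below is not PredPol’s algorithm, which is proprietary. It is a minimal one-dimensional illustration in Python, and the function name and the parameter values (`mu`, `alpha`, `omega`) are assumptions chosen for the example.

```python
import math

def self_exciting_intensity(t, past_events, mu=0.5, alpha=0.8, omega=1.0):
    """Expected event rate at time t for a simple self-exciting process.

    mu    : constant background rate (hypothetical value)
    alpha : expected number of follow-on events each event triggers
    omega : how quickly the boost from a past event decays
    """
    boost = sum(
        alpha * omega * math.exp(-omega * (t - t_i))
        for t_i in past_events
        if t_i < t  # only events that have already happened count
    )
    return mu + boost

# Hypothetical recorded incident times (in days) for one map cell.
recorded = [1.0, 1.5, 4.0]

quiet = self_exciting_intensity(0.5, recorded)  # before any recorded event
busy = self_exciting_intensity(1.6, recorded)   # shortly after two events
```

In this sketch the rate depends only on recorded events, so a cell that generates more records is scored as higher risk, whatever goes unrecorded elsewhere.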

A news article from the Wisconsin State Journal last year indicates that crime analysts in Madison are using predictive policing software, although the details are vague and there isn’t much documentation that I could dig up. But in a city with well-documented and profound racial disparities in policing, we can only guess that this will reinforce those practices.

from the Race to Equity Report


As Ingrid Burrington writes in a Nation article:

All of these applications assume the credibility of the underlying crime data—and the policing methods that generate that data in the first place. As countless scandals over quotas in police departments demonstrate, that is a huge assumption.

She observes:

It’s easy to imagine how biased data could render the criminal-justice system even more of a black box for due process, replacing racist cops with racist algorithms.

The adoption of software solutions for policing, whether implicitly or explicitly, often contains the hope of bypassing the problem of structural racism in policing. But these software packages can only reinforce those racist assumptions if they rely on datasets constructed through policing practices. Madison Police Chief Koval rejects claims that his department is responsible for racial disparities in policing, deferring blame onto the larger community:

“On any given month, more than 98 percent of our calls for service are activated through the 9-1-1 Center,” he said in a statement. “Upon arrival, our officers are required by law to evaluate the behavior that is manifesting to see if it reaches legal thresholds required to ticket and/or arrest.”

But, as I argued in an earlier post, the practices of patrolling officers seem to reflect those same disparities (although the role of community members in perpetuating racism should also be taken seriously). This can only lead to a situation where the algorithms merely reflect and hone actually existing ideas and spatial imaginaries about who commits crimes and where. And I don’t doubt that these systems will produce arrest statistics to back up their claims, but whose interests are they serving?

As Ellen Huet observes in Forbes:

Police departments pay around $10,000 to $150,000 a year to gain access to these red boxes, having heard that other departments that do so have seen double-digit drops in crime. It’s impossible to know if PredPol prevents crime, since crime rates fluctuate, or to know the details of the software’s black-box algorithm, but budget-strapped police chiefs don’t care. Santa Cruz saw burglaries drop by 11% and robberies by 27% in the first year of using the software. “I’m not really concerned about the formulas,” said Atlanta Police Chief George Turner, who implemented the software in July 2013. “That’s not my business. My business is to fight crime in my city.”

I think it’s time to be concerned.

In an open letter to the Madison Police Chief, the Young, Gifted and Black Coalition (YGB) recognizes the role of policing practices in producing these disparities, citing reports that show Black people in the county are eight times more likely to be arrested than whites (this number is probably closer to eleven in the city of Madison). In response, they call for self-determination and an end to interactions with the police. In his patronizing response, Madison Police Chief Koval instead vows to increase police presence in neighborhoods of color, denying the role of policing practices in producing and/or upholding the city’s longstanding disparities. Similarly, Mayor Soglin has dismissed such critiques, saying racial bias in policing is “the wrong question to be asked,” instead deferring blame onto “the entire criminal justice system.”
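For readers unfamiliar with how a figure like “eight times more likely” is derived: it is a ratio of per-capita arrest rates. The Python sketch below uses made-up counts purely to show the arithmetic. They are not the figures from the reports cited above.

```python
def arrest_rate_ratio(arrests_a, population_a, arrests_b, population_b):
    """Ratio of group A's per-capita arrest rate to group B's."""
    rate_a = arrests_a / population_a
    rate_b = arrests_b / population_b
    return rate_a / rate_b

# Hypothetical counts chosen only to illustrate the calculation.
ratio = arrest_rate_ratio(arrests_a=400, population_a=10_000,
                          arrests_b=500, population_b=100_000)
```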

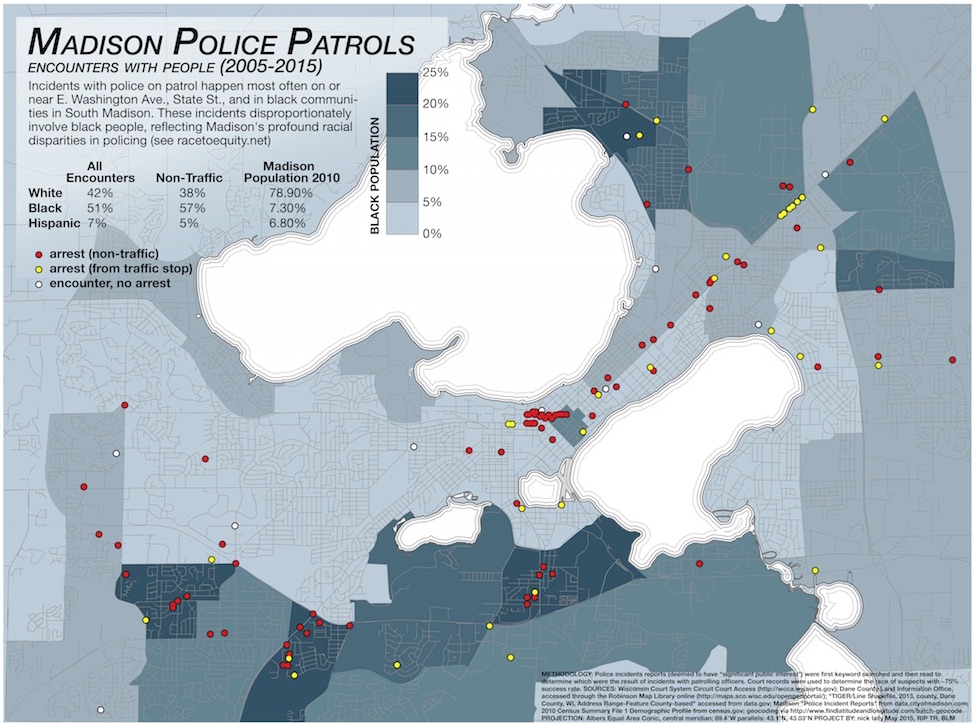

With these divergent views on policing in mind, I began searching through police incident reports to see if they would reveal spatial or racial patterns of policing. I was particularly interested in revealing police patrol patterns to substantiate claims made by YGB and others that communities of color are over-policed. Patrol patterns are not made public by the city, but as others have observed, the presence of police in affluent Madison neighborhoods is minimal. To gather the data, I first keyword searched and then read police incident reports to determine incidents that happened while an officer was on patrol, not precipitated by a service call. I then searched court records to determine the race of those arrested (only cases that went to trial showed up on this search, which accounted for about 75% of incidents) and mapped the results. I found that arrests were clustered on the busy East Washington Avenue that traverses the isthmus, the bar and restaurant-filled State Street that connects campus with the Capitol building, and three communities in South Madison with high Black populations. I also found profound racial disparities in who was targeted and arrested in patrol stops, mirroring the findings in the Race to Equity report.
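The keyword step described above might look something like the following Python sketch. The keywords, field names, and sample reports are all hypothetical placeholders, not the terms or data used for the map, and any match would still need a close reading to confirm that the incident really began on patrol.

```python
import re

# Hypothetical phrases suggesting an officer initiated contact while on patrol.
PATROL_KEYWORDS = ["on patrol", "while patrolling", "officer observed", "traffic stop"]
PATTERN = re.compile("|".join(re.escape(k) for k in PATROL_KEYWORDS), re.IGNORECASE)

def flag_patrol_candidates(reports):
    """Return reports whose narrative mentions any patrol keyword."""
    return [r for r in reports if PATTERN.search(r["narrative"])]

# Made-up example records standing in for real incident reports.
sample_reports = [
    {"id": 1, "narrative": "Officer observed a vehicle with expired plates."},
    {"id": 2, "narrative": "Caller reported a break-in at a residence."},
    {"id": 3, "narrative": "While patrolling State Street, officers made an arrest."},
]

candidates = flag_patrol_candidates(sample_reports)
candidate_ids = [r["id"] for r in candidates]
```

A filter like this only narrows the pile. It will miss reports that describe patrol stops in other words, which is one reason the resulting dataset is small.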

Of course, there are limitations to this map. First, it is based on a limited amount of data, in large part because incident reports do not necessarily indicate when an officer was on patrol. Only through keyword searches and close readings was I able to build this database. Second, incidents only enter the city database if they are deemed to have “significant public interest.” The criteria for this categorization, as far as I am aware, are not made explicit by the city. Third, many incidents involved multiple people, which is not represented on this map. And last, I have not yet attempted to map the spatial distribution of the race of those involved, which may reveal other patterns. Despite these limitations, the map does reveal patterns that substantiate claims of uneven policing across the city.